CI/CD Guardrails: Preventing Friday Deployments

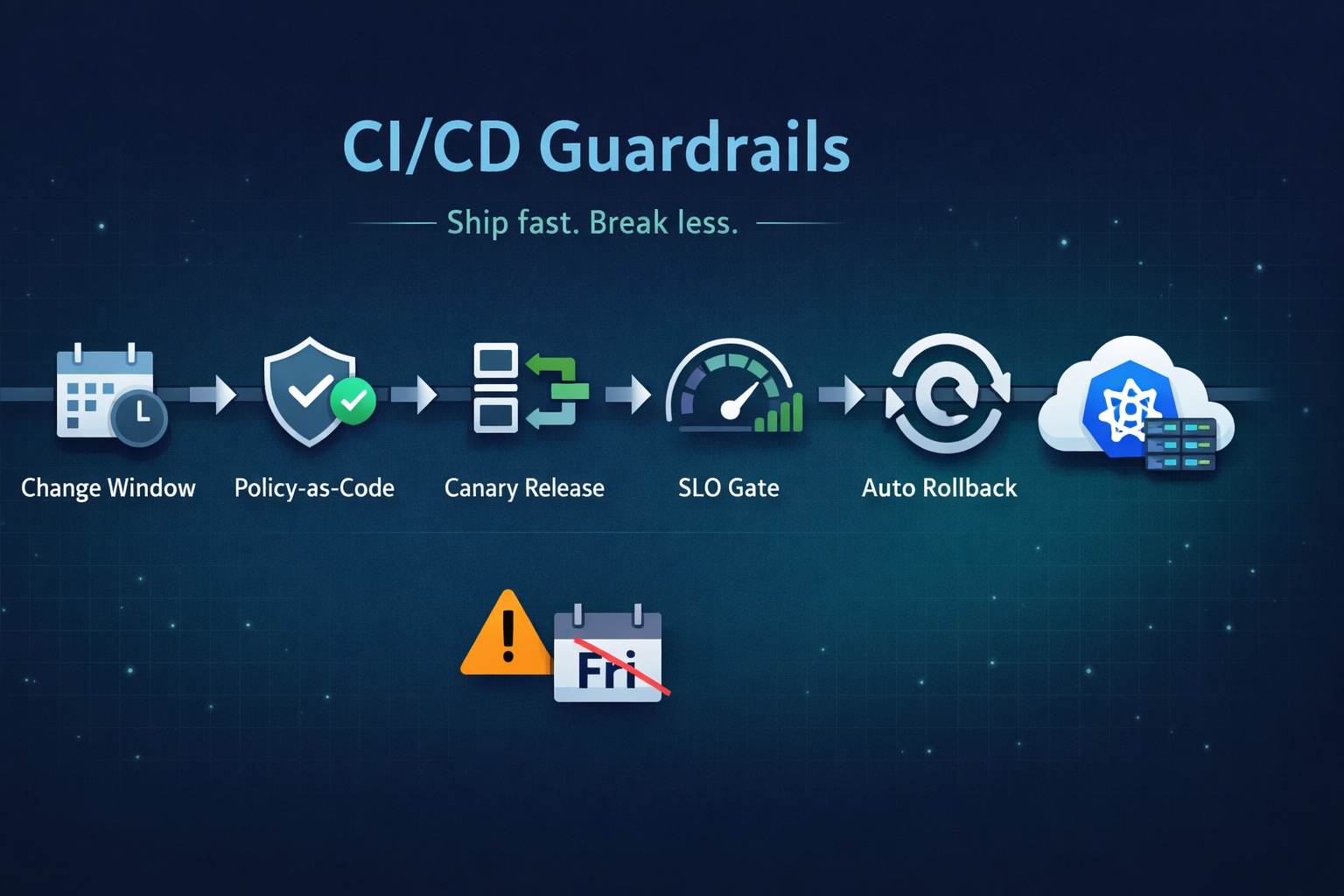

Ship fast without breaking prod. Our guardrails: change windows, policy-as-code, artifact provenance, progressive delivery, SLO-based gating, feature flags, safe database changes, and automated rollback.

Friday deploys aren’t the problem—unguarded deploys are.

The “no deploys on Friday” rule is a symptom of deeper issues: incomplete testing signals, missing production safety mechanisms, unclear ownership, and pipelines that can ship anything as long as it builds.

The goal of guardrails is simple:

- Make safe changes easy to ship

- Make risky changes hard to ship accidentally

- Detect failures early

- Roll back automatically when needed

Below is the practical playbook we use to prevent weekend incidents without killing delivery velocity.

What a Guardrail Actually Is

A guardrail is not more approvals. It is automation + policy + progressive release that reduces the blast radius of mistakes.

A good guardrail has three properties:

- Objective: based on signals, not vibes.

- Enforceable: the pipeline can block or slow a change automatically.

- Reversible: rollbacks are easy and fast.

Guardrail #1: Change Windows (But Make Them Smart)

A blanket “no Friday deployments” rule works in orgs that don’t trust their release process. High-performing teams do better with risk-based change windows.

A practical rule set

- Low-risk changes: allowed anytime (including Friday)

  - docs, dashboards, feature flags, config-only changes with validation

- Medium-risk changes: allowed during staffed hours

  - dependency bumps, infrastructure changes with small blast radius

- High-risk changes: require explicit scheduling and escalation paths

  - database migrations, networking/IAM changes, major version upgrades

Implementation pattern

Use a pipeline gate based on:

- calendar (business hours)

- change type (labels in PR or commit scope)

- impact (services affected)

- availability of on-call and rollback readiness

Key point: the pipeline enforces the rules automatically. Humans only intervene for exceptions.
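A minimal sketch of such a gate, assuming three risk tiers and staffed hours of Monday to Friday, 09:00 to 17:00. The `Change` fields, the tier names, and the hours are illustrative assumptions, not a prescribed schema; in practice the risk tier would come from PR labels or commit scope.

```python
from dataclasses import dataclass
from datetime import datetime, time

# Hypothetical risk tiers mirroring the rule set above.
LOW, MEDIUM, HIGH = "low", "medium", "high"


@dataclass
class Change:
    risk: str                # e.g. derived from PR labels or commit scope
    on_call_available: bool  # is someone staffed to respond?
    rollback_ready: bool     # has a rollback path been verified?
    scheduled: bool = False  # high-risk changes need an explicit slot


def in_staffed_hours(now: datetime) -> bool:
    """Assumed staffed hours: Mon-Fri, 09:00-17:00 local time."""
    return now.weekday() < 5 and time(9, 0) <= now.time() < time(17, 0)


def gate(change: Change, now: datetime) -> tuple[bool, str]:
    """Return (allowed, reason) for a deployment attempt."""
    if not change.rollback_ready:
        return False, "rollback path not verified"
    if change.risk == LOW:
        return True, "low risk: allowed anytime"
    if not change.on_call_available:
        return False, "no on-call coverage"
    if change.risk == MEDIUM:
        if in_staffed_hours(now):
            return True, "medium risk: inside staffed hours"
        return False, "medium risk: outside staffed hours"
    if change.risk == HIGH:
        if change.scheduled and in_staffed_hours(now):
            return True, "high risk: scheduled and staffed"
        return False, "high risk: needs explicit scheduling"
    return False, f"unknown risk tier: {change.risk!r}"
```

With these assumptions, a low-risk change passes on a Friday evening while a medium-risk change is held until staffed hours. Unknown tiers are denied, so a mislabeled change cannot slip through by accident.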

Guardrail #2: Policy-as-Code (Stop Arguing in PRs)

If your “standards” live in tribal knowledge or a Confluence page, you don’t have standards.

Use policy-as-code to enforce baseline requirements on:

- Kubernetes manifests

- Terraform plans

- container build metadata

- security posture and permissions

Concrete examples to enforce

Kubernetes:

- no `latest` tags

- resource requests/limits required

- health checks required

- no privileged containers

- only approved base images

Terraform:

- no public S3 buckets

- no `0.0.0.0/0` inbound rules to sensitive ports

- encryption at rest required

- tagging policy compliance

- deny destructive changes unless explicitly acknowledged

Tools that fit

- OPA / Conftest for YAML/Terraform plan evaluation

- Kyverno or Gatekeeper for cluster-side enforcement

- terraform validate + tflint for baseline hygiene

Best practice: run policy checks in CI and enforce in admission control for defense in depth.
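To make the Kubernetes rules concrete, here is a rough Python sketch of four of them applied to a pod spec that has already been parsed into a dict. In a real pipeline these rules would more likely be written for one of the tools above; the function names here are hypothetical and the check is deliberately simplified (it ignores init containers, for example).

```python
def image_tag(image: str) -> str:
    """Return the tag of an image reference; a missing tag resolves to 'latest'."""
    if "@" in image:
        return ""  # digest-pinned reference, no mutable tag in play
    last_segment = image.rsplit("/", 1)[-1]  # skip any registry host:port part
    return last_segment.split(":", 1)[1] if ":" in last_segment else "latest"


def check_containers(pod_spec: dict) -> list[str]:
    """Return human-readable policy violations for each container in the spec."""
    violations = []
    for container in pod_spec.get("containers", []):
        name = container.get("name", "<unnamed>")
        if image_tag(container.get("image", "")) == "latest":
            violations.append(f"{name}: image uses the latest tag")
        resources = container.get("resources", {})
        if not resources.get("requests") or not resources.get("limits"):
            violations.append(f"{name}: resource requests/limits missing")
        if "livenessProbe" not in container or "readinessProbe" not in container:
            violations.append(f"{name}: health checks missing")
        if container.get("securityContext", {}).get("privileged", False):
            violations.append(f"{name}: privileged container")
    return violations
```

A CI step could fail the build whenever the returned list is non-empty and print the violations as the reason, which keeps the discussion out of the PR thread.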

Guardrail #3: Build Provenance and Artifact Immutability

Most “mystery incidents” come from one of these:

- the thing you tested is not the thing you deployed

- the artifact was rebuilt later with different dependencies

- environment drift changed runtime behavior

Guardrails here are about traceability.

Minimum bar

- Immutable artifacts: produce a versioned image/artifact once; promote the exact same digest across environments.

- SBOM: generate a software bill of materials.

- Signed artifacts: sign container images.

- Provenance metadata: capture build inputs, commit SHA, builder identity.

This doesn’t just help security—it makes incident response faster:

- “Which build is running?”

- “Did it include that library?”

- “What changed since Wednesday?”
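One way to picture "promote the exact same digest" is a promotion check that refuses anything not pinned by digest or not previously tested. This is a sketch under assumptions: `digest_of` hashes raw bytes as a stand-in for the registry's own image digest, and the set of tested digests is assumed to be recorded by the staging stage.

```python
import hashlib

HEX_DIGITS = set("0123456789abcdef")


def digest_of(artifact: bytes) -> str:
    """Content digest of an artifact (stand-in for a registry image digest)."""
    return "sha256:" + hashlib.sha256(artifact).hexdigest()


def is_digest_pinned(image_ref: str) -> bool:
    """True for references of the form name@sha256:<64 lowercase hex chars>."""
    _, separator, hex_part = image_ref.partition("@sha256:")
    return bool(separator) and len(hex_part) == 64 and set(hex_part) <= HEX_DIGITS


def can_promote(image_ref: str, tested_digests: set[str]) -> bool:
    """Allow promotion only for digest-pinned refs that passed an earlier stage."""
    if not is_digest_pinned(image_ref):
        return False  # mutable tags can point at a different build later
    return image_ref.split("@", 1)[1] in tested_digests
```

Because the check keys on the digest rather than a tag, a rebuild with different dependencies produces a different digest and cannot be promoted until it has been tested itself.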

Guardrail #4: Progressive Delivery (Blast Radius First)

If you deploy to 100% of users in one shot, your “guardrail” is hope.

Progressive delivery techniques reduce blast radius:

- Canary: shift 1% → 10% → 50% → 100% while monitoring signals

- Blue/Green: run both versions; switch traffic atomically; instant rollback

- Ring deployments: internal users first, then a small region, then global

The production-grade version

Progressive delivery must be coupled with:

- real-time metrics (error rate, latency, saturation)

- automated analysis and promotion

- automatic rollback when thresholds breach

- alerting that matches the rollout phases

If you can’t roll back automatically, canary doesn’t help. It just fails slower.
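The coupling between traffic steps and rollback can be sketched in a few lines. Here `set_weight` and `is_healthy` are hypothetical callbacks: the first would talk to your traffic router, the second would evaluate metrics for the current step. Both are assumptions for illustration, not a real controller's API.

```python
from typing import Callable

STEPS = (1, 10, 50, 100)  # percent of traffic, matching the canary example above


def run_canary(
    set_weight: Callable[[int], None],
    is_healthy: Callable[[int], bool],
    steps: tuple[int, ...] = STEPS,
) -> bool:
    """Walk through the traffic steps; revert to 0% on the first unhealthy step."""
    for percent in steps:
        set_weight(percent)
        if not is_healthy(percent):
            set_weight(0)  # automatic rollback: all traffic back to the stable version
            return False
    return True
```

In a real rollout, `is_healthy` would wait for an observation window before answering, so each step has time to surface errors before promotion continues.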

Guardrail #5: SLO-Based Release Gates (Quality Signals That Matter)

Passing unit tests rarely correlates with production safety. Your gates should reflect production health objectives.

What to gate on

Pick a small set of high-signal indicators:

- Availability: request success rate for key endpoints

- Latency: p95/p99 latency for critical routes

- Errors: 5xx rate, exception volume, retry rates

- Saturation: CPU throttling, memory pressure, queue backlog

Keep it non-brittle

Avoid gates that flap due to noise. Common tactics:

- compare against baseline (previous stable version) instead of a fixed threshold

- use short windows for fast feedback (2–5 minutes) with smoothing

- include a manual override with an audit trail for rare cases

This is where “Friday deploy fear” dies—because the deployment itself proves it’s safe.
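As an illustration of the baseline-comparison tactic, the sketch below averages error-rate samples over the window and compares the new version with the previous stable one. The `max_ratio` and `min_floor` values are assumed tolerances chosen for the example, not recommendations.

```python
from statistics import mean


def gate_passes(
    baseline_error_rates: list[float],
    canary_error_rates: list[float],
    max_ratio: float = 1.5,    # assumed: canary may be up to 1.5x the baseline
    min_floor: float = 0.001,  # assumed floor so a near-zero baseline does not flap
) -> bool:
    """Compare the smoothed canary error rate with the smoothed baseline."""
    if not baseline_error_rates or not canary_error_rates:
        return False  # missing data is treated as a failed gate
    baseline = mean(baseline_error_rates)
    canary = mean(canary_error_rates)
    return canary <= max(baseline, min_floor) * max_ratio
```

Averaging over the window is the simplest form of smoothing; the floor matters when the stable version has almost no errors, where a single failed request would otherwise look like an enormous relative regression.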

Guardrail #6: Feature Flags with Safe Defaults

Feature flags are a guardrail when used correctly:

- deploy code dark

- enable for internal users

- ramp progressively

- kill switch instantly

Rules we enforce:

- flags must fail closed (safe default state)

- every flag needs an owner and an expiry date

- clean up stale flags (they are operational debt)

Flags are not a substitute for canaries, but they are excellent for minimizing customer impact.
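The three rules translate into a small data model. This is a sketch with a hypothetical in-memory flag store; a real flag service would sit behind `is_enabled`, but the same defaults would apply.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Flag:
    name: str
    owner: str             # every flag needs an owner
    expires: date          # and an expiry date
    enabled: bool = False  # safe default: off


def is_enabled(flags: dict[str, Flag], name: str) -> bool:
    """Fail closed: a flag that is missing from the store evaluates to off."""
    flag = flags.get(name)
    return flag.enabled if flag is not None else False


def stale_flags(flags: dict[str, Flag], today: date) -> list[str]:
    """Names of flags past their expiry date, for cleanup reports."""
    return sorted(name for name, flag in flags.items() if today > flag.expires)
```

Expired flags are only reported here, not switched off, since silently disabling a fully rolled-out feature would itself be a risky change.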

Guardrail #7: Database Change Safety (The Quiet Friday Killer)

Many weekend incidents look like “deployment went fine” and then:

- the app starts timing out

- deadlocks spike

- CPU pegs

- replication lags

- a migration blocks writes

Safer patterns

- expand/contract migrations

  - add new schema (backwards compatible)

  - deploy code that supports both schemas

  - backfill asynchronously

  - cut over

  - remove old schema later

- online migrations (tooling varies by DB engine)

- read-only rehearsal with production-like snapshots

- migration runtime limits and lock monitoring

Hard rule: never ship a migration you can’t pause or roll back safely.
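The backfill step of an expand/contract migration is where pausability matters most. Below is a minimal sketch using SQLite from the Python standard library; the `users` table, the `name` and `display_name` columns, and the `should_pause` callback are all hypothetical, and a production database would need its own batching and lock-monitoring strategy.

```python
import sqlite3
from typing import Callable


def backfill_in_batches(
    conn: sqlite3.Connection,
    batch_size: int = 1000,
    should_pause: Callable[[], bool] = lambda: False,
) -> int:
    """Copy users.name into users.display_name in small committed batches."""
    total = 0
    while not should_pause():
        cursor = conn.execute(
            "UPDATE users SET display_name = name "
            "WHERE id IN ("
            "  SELECT id FROM users"
            "  WHERE display_name IS NULL AND name IS NOT NULL"
            "  LIMIT ?"
            ")",
            (batch_size,),
        )
        conn.commit()  # short transactions keep locks brief
        if cursor.rowcount == 0:
            break  # nothing left to backfill
        total += cursor.rowcount
    return total
```

Because each batch commits on its own, the job can stop between batches and resume later from wherever it left off; the old column stays untouched until the contract step.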

Guardrail #8: Automated Rollback (Make It Boring)

Manual rollbacks fail at 2am. Automate them.

What “automated rollback” means

- the deployment controller monitors rollout health

- if SLO gates fail, it reverts traffic to the last known good version

- it pages humans with context, not panic

Combine this with runbooks and one-click redeploy to reduce MTTR.

A Reference CI/CD Guardrail Pipeline

Here’s a simple but effective flow you can adapt:

- Pre-merge (PR)

  - unit + integration tests

  - linting + static analysis

  - policy-as-code checks (manifests + IaC)

  - preview environment (optional but powerful)

- Merge → Build

  - build artifact once

  - generate SBOM

  - sign artifact

  - store metadata (commit SHA, build ID, digest)

- Staging

  - deploy same artifact by digest

  - smoke tests + synthetic checks

  - soak window (optional)

- Production

  - enforce change window by risk

  - canary or blue/green rollout

  - SLO gating + automated analysis

  - auto-rollback on failure

  - alerting and release notes

If you do only one thing this quarter: start promoting immutable artifacts by digest. It eliminates a surprising amount of release chaos.

Measuring Whether Guardrails Work

Don’t judge guardrails by “fewer deploys.” Judge by outcomes:

- fewer Sev-1 incidents caused by releases

- lower change failure rate

- faster rollback time

- improved MTTR

- higher deploy frequency (yes, higher)

In mature systems, guardrails increase delivery speed because they reduce fear.
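Two of these outcomes are simple arithmetic over a deploy log. The sketch below assumes a hypothetical `Deploy` record and measures time to restore from the moment of the failed deploy; teams that track incident start times separately would use those instead.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Deploy:
    at: datetime
    failed: bool = False
    restored_at: Optional[datetime] = None  # set once service is healthy again


def change_failure_rate(deploys: list[Deploy]) -> float:
    """Fraction of deploys that caused a failure."""
    if not deploys:
        return 0.0
    return sum(d.failed for d in deploys) / len(deploys)


def mean_time_to_restore(deploys: list[Deploy]) -> Optional[timedelta]:
    """Average time from a failed deploy to restoration, if any were restored."""
    durations = [d.restored_at - d.at for d in deploys if d.failed and d.restored_at]
    if not durations:
        return None
    return sum(durations, timedelta()) / len(durations)
```

Tracking both numbers alongside deploy frequency shows whether guardrails are reducing failures or merely reducing deploys.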

The Bottom Line

Banning Friday deployments is an organizational coping mechanism.

Guardrails are an engineering solution.

If your pipeline can:

- enforce policy automatically,

- release progressively with real signals,

- and roll back on its own,

then Friday becomes just another day to ship.

Quick Checklist (Copy/Paste)

- Change windows enforced by risk, not superstition

- Policy-as-code in CI + admission control

- Immutable artifacts promoted by digest

- SBOM + signing + provenance metadata

- Progressive delivery (canary/blue-green)

- SLO-based release gates

- Feature flags with owner + expiry

- Safe DB migration patterns (expand/contract)

- Automated rollback + actionable alerts

